Why AI Hallucinates When You Underwrite (And How to Fix It)

There’s a persistent misconception in commercial real estate that AI errors are random. In reality, most issues stem from how inputs are structured. If you want to prevent AI underwriting hallucinations in CRE, you need to understand that AI doesn’t “hallucinate” the way people think: it fills gaps when assumptions are missing. Small prompt changes can eliminate most of these underwriting errors.

If you’re using tools like Manus, Claude, or NotebookLM for deal analysis, this becomes critical. Because the moment you leave assumptions undefined, the AI fills in the gaps. And that’s where things break.

The Venice Deal Test: What Actually Happened

Let’s ground this in a real scenario. I ran a live underwriting test using Manus desktop on a 14-unit multifamily deal in Venice, California. The dataset included:

- Profit & Loss statements
- Rent roll
- ADU plans
- Lease agreements
- Property photos

Then I gave a simple instruction:

“Build me a highly detailed, professional, persuasive offering memorandum.”

The output?

- Clean structure
- Strong narrative
- Professional formatting
- Complete deck (16 pages)

At first glance, it looked perfect. But there was one problem.

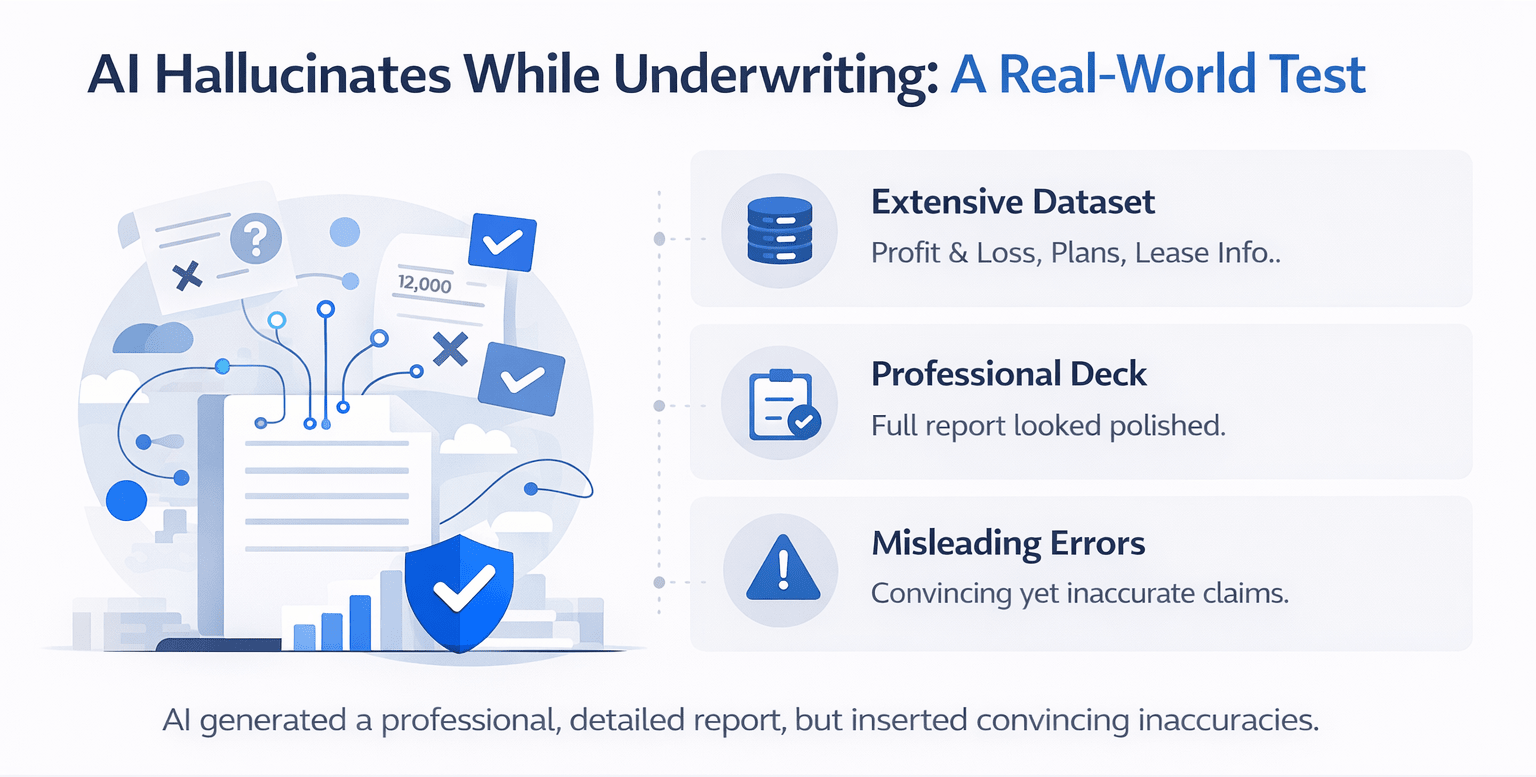

The Hidden Error Most People Miss

The model made a critical assumption on its own. I never specified:

- Rent growth rate
- Expense growth
- Vacancy
- Exit cap

So the AI pulled external data and made decisions independently.

That means:

- It could have used outdated comps
- It could have referenced biased sources
- It could have blended multiple assumptions inconsistently

And most importantly:

It didn’t tell me which assumptions it guessed. This is the core issue behind preventing AI underwriting hallucinations in CRE workflows.

What “AI Hallucination” Actually Means in CRE

Let’s clarify something. AI doesn’t randomly invent numbers in underwriting.

Instead, it:

- Fills missing variables
- Infers based on patterns
- Pulls generalized market data
- Optimizes for completion, not accuracy

So what people call hallucination is actually:

A missing input problem, not an AI problem. To understand where AI starts making incorrect assumptions, it helps to revisit how commercial real estate underwriting frameworks define core deal variables and risk inputs.

Where Underwriting Breaks Without Guardrails

Here are the exact areas where AI starts making decisions if you don’t control it:

| Underwriting Variable | What AI Does Without Input | Risk Level |
|---|---|---|
| Rent Growth | Pulls market averages | High |
| Expense Growth | Assumes inflation trends | Medium |
| Vacancy Rate | Uses generic benchmarks | High |
| Exit Cap | Infers from comps | Very High |
| Loan Terms | Guesses typical financing | High |
| Hold Period | Defaults to common timelines | Medium |
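One way to make the table above actionable is a pre-flight check that lists which variables a deal input still leaves open before anything is sent to a model. The sketch below is only an illustration: the key names (`rent_growth`, `exit_cap`, and so on) and the `find_undefined` helper are hypothetical and not part of any tool mentioned in this article.

```python
# Risk levels mirror the table above; the key names are illustrative only.
RISK_IF_UNDEFINED = {
    "rent_growth": "High",
    "expense_growth": "Medium",
    "vacancy_rate": "High",
    "exit_cap": "Very High",
    "loan_terms": "High",
    "hold_period": "Medium",
}


def find_undefined(deal_inputs: dict) -> dict:
    """Return {variable: risk level} for every variable that is missing or None."""
    return {
        name: risk
        for name, risk in RISK_IF_UNDEFINED.items()
        if deal_inputs.get(name) is None
    }


# Example: only two variables are pinned down, so the rest are left to the model.
partial_inputs = {"rent_growth": 0.03, "vacancy_rate": 0.05}
gaps = find_undefined(partial_inputs)
for name, risk in gaps.items():
    print(f"{name}: undefined ({risk} risk)")
```

Running a check like this before prompting turns "the AI guessed" into a visible list of open items you can fill in yourself.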

The Real Problem: You Didn’t Define the Model

Think about how you train a junior analyst.

You wouldn’t say:

“Here’s a rent roll. Go underwrite the deal.”

You would first define:

- Your house assumptions
- Your return targets
- Your underwriting standards

AI works the same way. Without that context, it builds its own model. And that model is almost never aligned with your firm.

The Fix: Lock Assumptions Before You Prompt

This is the highest ROI change you can make.

Instead of:

“Underwrite this deal.”

You use:

Underwrite this deal using the following assumptions:

• Rent growth: 3% annually

• Expense growth: 3% annually

• Vacancy: 5%

• Management fee: 3% of EGI

• Reserves: $300/unit/year

• Exit cap: going-in cap + 50 bps

• Hold period: 5 years

• Target IRR: 16%

Now the AI:

- Stops guessing
- Aligns with your strategy
- Produces consistent outputs

This is how you prevent AI underwriting hallucinations at scale.
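To see why locking these values matters, it can help to run the arithmetic they imply. The sketch below applies the assumptions from the prompt above to a deal with made-up inputs: the 14-unit count comes from the Venice example, but the gross rent, other operating expenses, and purchase price are hypothetical placeholders, not the actual deal figures. Two modeling conventions are also assumptions here: reserves grow with expenses, and the exit value capitalizes the NOI of the year after the hold period.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Assumptions:
    rent_growth: float = 0.03         # 3% annually
    expense_growth: float = 0.03      # 3% annually
    vacancy: float = 0.05             # 5% of gross potential rent
    mgmt_fee: float = 0.03            # 3% of EGI
    reserves_per_unit: float = 300.0  # $300/unit/year
    exit_cap_spread: float = 0.005    # going-in cap + 50 bps
    hold_years: int = 5


@dataclass(frozen=True)
class Deal:
    # Hypothetical inputs for illustration, NOT the Venice deal's real numbers.
    units: int = 14
    gross_rent_y1: float = 500_000.0
    other_opex_y1: float = 150_000.0
    price: float = 6_000_000.0


def noi(year: int, a: Assumptions, d: Deal) -> float:
    """Net operating income for a 1-based year; growth compounds from year 1."""
    rent_factor = (1 + a.rent_growth) ** (year - 1)
    expense_factor = (1 + a.expense_growth) ** (year - 1)
    egi = d.gross_rent_y1 * rent_factor * (1 - a.vacancy)
    mgmt = a.mgmt_fee * egi
    # Assumption: reserves escalate at the expense growth rate.
    reserves = a.reserves_per_unit * d.units * expense_factor
    other = d.other_opex_y1 * expense_factor
    return egi - mgmt - reserves - other


def exit_value(a: Assumptions, d: Deal) -> float:
    """Sale value at the end of the hold, using going-in cap plus the spread."""
    going_in_cap = noi(1, a, d) / d.price
    exit_cap = going_in_cap + a.exit_cap_spread
    # Assumption: capitalize the NOI of the year following the hold period.
    forward_noi = noi(a.hold_years + 1, a, d)
    return forward_noi / exit_cap


a, d = Assumptions(), Deal()
print(f"Year-1 NOI: {noi(1, a, d):,.0f}")
print(f"Exit value: {exit_value(a, d):,.0f}")
```

Every number in the output traces back to a value you wrote down, which is the point. The 16% target IRR is left out because checking it requires financing terms and annual cash flows that the prompt above does not specify.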

Before vs After: Controlled vs Uncontrolled AI Output

| Scenario | AI Behavior | Output Quality |
|---|---|---|
| No Assumptions | AI guesses everything | Inconsistent |
| Partial Inputs | Mixed assumptions | Unstable |
| Full Assumptions Defined | AI executes instructions | Reliable |

Why Most Prompt Engineering Advice Fails

There’s a lot of noise around AI prompts:

- “Act like a senior analyst.”
- “Use chain-of-thought reasoning.”
- “Write step-by-step logic.”

Most of it doesn’t matter for CRE underwriting. Because the real issue isn’t how you phrase the task. It’s what inputs you provide.

What Actually Moves the Needle

Instead of fancy prompts, focus on:

- Defined variables
- Structured inputs
- Consistent templates
- Repeatable workflows

AI performs best when:

- The problem is clearly constrained
- The inputs are complete
- The expectations are fixed

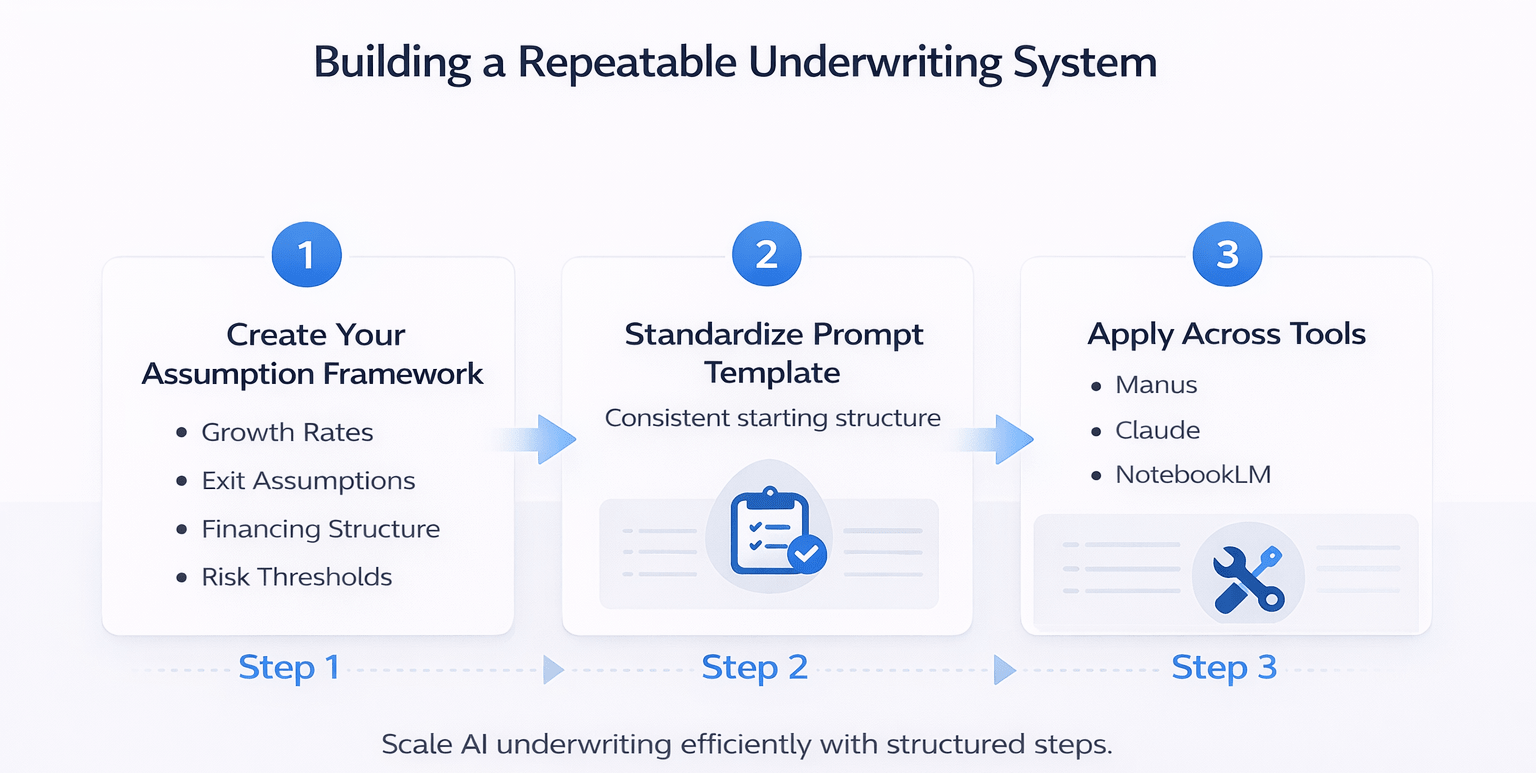

Building a Repeatable Underwriting System

If you want to scale this, don’t rewrite prompts every time. Build a system.

Step 1: Create Your Assumption Framework

Document your:

- Growth rates
- Exit assumptions
- Financing structure
- Risk thresholds

Step 2: Standardize Your Prompt Template

Every deal starts with the same structure.
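A standardized template can be as simple as a fixed block of text with named placeholders. The sketch below uses Python's standard-library `string.Template`; the placeholder names and the `build_prompt` helper are hypothetical, and the values are the example assumptions from earlier in this article. A useful side effect is that `substitute` raises a `KeyError` when any placeholder has no value, so an incomplete assumption set never reaches the model.

```python
from string import Template

# The template text stays fixed; only the values change per firm or strategy.
PROMPT_TEMPLATE = Template(
    "Underwrite this deal using the following assumptions:\n"
    "- Rent growth: $rent_growth annually\n"
    "- Expense growth: $expense_growth annually\n"
    "- Vacancy: $vacancy\n"
    "- Management fee: $mgmt_fee of EGI\n"
    "- Reserves: $reserves\n"
    "- Exit cap: $exit_cap\n"
    "- Hold period: $hold_period\n"
    "- Target IRR: $target_irr\n"
)

# Example house assumptions, taken from the prompt shown earlier.
HOUSE_ASSUMPTIONS = {
    "rent_growth": "3%",
    "expense_growth": "3%",
    "vacancy": "5%",
    "mgmt_fee": "3%",
    "reserves": "$300/unit/year",
    "exit_cap": "going-in cap + 50 bps",
    "hold_period": "5 years",
    "target_irr": "16%",
}


def build_prompt(assumptions: dict) -> str:
    """Fill the template; raises KeyError if any assumption is missing."""
    return PROMPT_TEMPLATE.substitute(assumptions)


prompt = build_prompt(HOUSE_ASSUMPTIONS)
print(prompt)
```

Because the failure happens at template time, a forgotten exit cap shows up as an error on your side rather than as a silent guess in the output.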

Step 3: Apply Across Tools

Whether you use Manus, Claude, or NotebookLM, the principle stays the same.

Common Mistakes That Cause AI Errors

Avoid these:

- Vague prompts
- Missing financial assumptions
- Over-reliance on AI research
- Not verifying outputs
- Mixing deal strategies
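Verification can be partly mechanical. One possible approach, which this article does not describe and which is offered only as a sketch, is to ask the model to echo back the assumptions it used as JSON and then compare that reply against the values you locked. The `diff_assumptions` helper, the key names, and the sample reply below are all hypothetical.

```python
import json


def diff_assumptions(locked: dict, reported_json: str) -> dict:
    """Return {name: (locked value, reported value)} for every mismatch.

    A locked value of None means the model introduced an assumption you never
    gave it; a reported value of None means it dropped one of yours.
    """
    reported = json.loads(reported_json)
    diffs = {}
    for name in locked.keys() | reported.keys():
        if locked.get(name) != reported.get(name):
            diffs[name] = (locked.get(name), reported.get(name))
    return diffs


locked = {"rent_growth": 0.03, "vacancy": 0.05, "hold_years": 5}

# Hypothetical model reply: vacancy was changed and a loan rate was added.
reply = '{"rent_growth": 0.03, "vacancy": 0.04, "hold_years": 5, "interest_rate": 0.065}'

diffs = diff_assumptions(locked, reply)
for name in sorted(diffs):
    print(name, diffs[name])
```

An empty result does not prove the analysis is right, but a non-empty one is a fast signal that the model drifted from your inputs or filled a gap on its own.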

The Bigger Insight: AI Is an Analyst, Not an Oracle

AI doesn’t “know” the right answer.

It:

- Predicts
- Estimates
- Completes patterns

So the better way to think about it is:

AI is a junior analyst with infinite speed but zero context. You still need to provide the context.

How This Changes CRE Workflows

Once you fix this:

- Underwriting becomes faster
- Outputs become consistent
- Teams trust AI outputs more
- Errors drop dramatically

And most importantly:

You stop second-guessing which assumptions the model used.

Build Smarter CRE AI Workflows

If you’re serious about improving underwriting accuracy, you need more than tools; you need systems. The difference between inconsistent outputs and reliable deal analysis comes down to how you structure your workflows, not which AI you use.

Join the AI for CRE Collective and learn directly from 600+ CRE professionals who are building real-world AI systems for underwriting, acquisitions, and deal execution. If you want proven prompts, workflows, and templates, subscribe to the newsletter and start implementing what actually works.

Conclusion

AI hallucinations in CRE underwriting aren’t random failures.

They’re predictable outcomes of missing inputs.

Once you understand that, the solution becomes simple:

- Define your assumptions
- Standardize your prompts
- Treat AI like an analyst

Do that consistently, and you’ll prevent AI underwriting hallucinations in CRE across every deal you run.

FAQs on Preventing AI Underwriting Hallucinations in CRE

1. What causes AI underwriting hallucinations in commercial real estate?

AI underwriting hallucinations occur when key assumptions are missing or unclear in the input prompt, forcing the model to fill gaps with inferred or external data.

- AI defaults to generalized market trends
- It may pull outdated or inconsistent data sources
- It does not flag which assumptions were guessed

Conclusion: Most hallucinations are caused by incomplete inputs rather than errors in the AI model itself.

2. How can you prevent AI underwriting hallucinations in CRE?

You can prevent AI underwriting hallucinations in CRE by explicitly defining all key deal assumptions before running the model.

- Include rent growth, vacancy, and expense assumptions
- Define exit cap rates and hold periods
- Provide financing and return targets

Conclusion: Clear, structured inputs eliminate guesswork and significantly improve output accuracy.

3. Why does AI make incorrect assumptions during underwriting?

AI is designed to complete tasks, not stop when information is missing.

- It predicts missing values based on patterns
- It prioritizes generating a complete output
- It lacks awareness of your firm’s specific assumptions

Conclusion: AI makes incorrect assumptions because it is optimized for completion, not for aligning with your internal underwriting standards.

4. What underwriting variables should always be defined in AI prompts?

Certain variables are critical and should never be left undefined.

- Rent growth rate
- Expense growth rate
- Vacancy assumptions
- Exit cap rate and hold period
- Loan terms and reserves

Conclusion: Defining these variables ensures that AI outputs align with your investment strategy and risk profile.

5. Is AI reliable for commercial real estate underwriting?

AI can be reliable when used correctly, but it requires proper setup and oversight.

- Works well for data extraction and structuring
- Improves speed and consistency
- Requires human validation for final decisions

Conclusion: AI is a powerful tool, but reliability depends on how well inputs and assumptions are controlled.

6. What is the difference between AI hallucination and missing input errors?

In CRE underwriting, hallucinations are often the result of missing inputs rather than true model errors.

- Hallucination: AI generates incorrect or assumed data
- Missing input: user failed to define key variables
- AI compensates for incomplete instructions

Conclusion: Most hallucinations are actually input failures, not flaws in the AI system.

7. How do standardized prompts improve underwriting accuracy?

Standardized prompts create consistency across all deals.

- Ensure all assumptions are included every time
- Reduce variability in outputs
- Enable repeatable workflows across teams

Conclusion: Standardization is key to scaling AI use without sacrificing accuracy.

8. Can AI replace human analysts in underwriting?

AI can assist analysts but cannot fully replace them.

- Automates repetitive calculations and summaries
- Speeds up deal analysis
- Requires human judgment for strategy and risk

Conclusion: AI acts as an analyst assistant, not a replacement for decision-making expertise.

9. What are the risks of relying on AI without defined assumptions?

Using AI without clear assumptions can lead to inconsistent and misleading outputs.

- Incorrect financial projections
- Misaligned investment strategies
- Hidden assumptions that go unnoticed

Conclusion: Lack of control over inputs creates risk in both analysis and decision-making.

10. How can CRE teams scale AI underwriting workflows effectively?

Scaling requires building structured systems rather than relying on one-off prompts.

- Create a standard assumption template
- Apply the same workflow across all deals
- Continuously refine based on output quality

Conclusion: Scalable AI adoption comes from repeatable systems, not individual usage.